Face Tracking

Face Tracking detects and tracks the user’s face. With Zappar’s Face Tracking library, you can attach 3D objects to the face itself, or render a 3D mesh that fits to, and deforms with, the face as the user moves and changes their expression. You could build face-filter experiences to allow users to try on different virtual sunglasses, for example, or to simulate face paint.

Before setting up face tracking, you must first add a Zappar camera to your scene, or replace any existing camera with one. Find out more in the camera setup documentation.

To place content on or around a user’s face, create a new FaceTracker object when your page loads:

const faceTracker = new ZapparBabylon.FaceTracker();

Model File

The face tracking algorithm requires a model file of data in order to operate. You can call loadDefaultModel() to load the model file that’s included with the library by default. The function returns a promise that resolves when the model has been loaded successfully.

const faceTracker = new ZapparBabylon.FaceTracker();
faceTracker.loadDefaultModel().then(() => {
  // The model has been loaded successfully
});

Alternatively, the library provides a loader for loading a tracker and model file:

const faceTracker = new ZapparBabylon.FaceTrackerLoader().load();

Face Anchors

Each FaceTracker exposes anchors for faces detected and tracked in the camera view. By default, a maximum of one (1) face is tracked at a time; however, you can change this using the maxFaces parameter:

faceTracker.maxFaces = 2;

Note that setting a value of two (2) or more faces may impact the performance and frame rate of the library. We recommend sticking with the default value of one, unless your use case requires tracking multiple faces.

Anchors have the following parameters:

| Parameter | Description |
|---|---|
| id | a string that’s unique for this anchor |
| visible | a boolean indicating whether this anchor is visible in the current camera frame |
| identity and expression | Float32Arrays containing data used for rendering a face-fitting mesh (see Face Mesh below) |
| onVisible | an event emitted when the anchor becomes visible; it is emitted during your call to camera.updateFrame() |
| onNotVisible | an event emitted when the anchor disappears from the camera view; it is emitted during your call to camera.updateFrame() |

You can access the anchors of a tracker using its anchors parameter, a JavaScript Map keyed by anchor ID. Trackers reuse existing non-visible anchors for new faces that appear, so there are never more than maxFaces anchors handled by a given tracker. Each tracker also exposes a JavaScript Set of the anchors visible in the current camera frame as its visible parameter.
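Because anchors is a standard Map and visible is a standard Set, ordinary JavaScript iteration works on both. The sketch below relies only on those two properties; describeAnchors is a hypothetical helper name, not part of the library.

```javascript
// Hypothetical helper (not part of the Zappar library): summarizes a tracker's
// anchors using only the documented `anchors` property (a Map keyed by anchor
// ID) and `visible` property (a Set of anchors in the current camera frame).
function describeAnchors(tracker) {
  const summary = [];
  for (const [id, anchor] of tracker.anchors) {
    // An anchor can exist in the Map while not being in the visible Set,
    // e.g. when it is waiting to be reused for a new face.
    summary.push({ id, visible: tracker.visible.has(anchor) });
  }
  return summary;
}
```

Calling describeAnchors(faceTracker) after camera.updateFrame() lists every anchor the tracker currently manages which, given the reuse behavior described above, should be at most maxFaces entries.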

Attaching 3D content to a face

To attach 3D content (e.g. Babylon.js objects or models) to a FaceTracker or a FaceAnchor, the library provides the FaceAnchorTransformNode. This is a Babylon.js TransformNode that will follow the supplied anchor (or, in the case of a supplied FaceTracker, the anchor most recently visible in that tracker) in the 3D view:

const faceAnchorTransformNode = new ZapparBabylon.FaceAnchorTransformNode('tracker', camera, faceTracker, scene);

// Child any 3D objects you'd like to track to this face
myModel.parent = faceAnchorTransformNode;

The TransformNode provides a coordinate system that has its origin at the center of the head, with the positive X axis to the right, the positive Y axis towards the top and the positive Z axis coming forward out of the user’s head.
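When positioning a child of the FaceAnchorTransformNode, the signs of its coordinates follow from the axes just described. The hypothetical helper faceSpaceOffset (not part of the library) turns named directions into an { x, y, z } offset under that convention; it assumes offsets are given in the transform node's own units, whose scale this page does not specify.

```javascript
// Hypothetical helper (not part of the Zappar library): converts named
// directions into the FaceAnchorTransformNode coordinate system described
// above, where +X points right, +Y points towards the top of the head and
// +Z points forward out of the face. Opposite directions become negatives.
// Units are assumed to be the transform node's own units.
function faceSpaceOffset({ right = 0, left = 0, up = 0, down = 0, forward = 0, back = 0 } = {}) {
  return {
    x: right - left,  // left of the head center is negative X
    y: up - down,     // below the head center is negative Y
    z: forward - back // behind the head center is negative Z
  };
}
```

For example, faceSpaceOffset({ up: 0.5, back: 0.2 }) gives { x: 0, y: 0.5, z: -0.2 }, a point above and slightly behind the head center, which could then be applied with myModel.position.set(p.x, p.y, p.z).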

Note that users typically expect to see a mirrored view of any user-facing camera feed. For more information, please see the section on mirroring the camera.

Events

In addition to using the anchors and visible parameters, FaceTrackers expose events that you can use to be notified of changes in the anchors or their visibility. The events are emitted during your call to camera.updateFrame().

| Event | Description |
|---|---|
| onNewAnchor | emitted when a new anchor is created by the tracker |
| onVisible | emitted when an anchor becomes visible in a camera frame |
| onNotVisible | emitted when an anchor goes from being visible in the previous camera frame to being not visible in the current frame |
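These three events can be combined to keep one piece of content per tracked face. The sketch below uses only the bind(handler) method on each event and the anchor's id; trackFaceContent, createContent and setContentVisible are hypothetical names, with the two callbacks supplied by you (for instance, creating a FaceAnchorTransformNode for the anchor and toggling its child meshes).

```javascript
// Sketch (hypothetical helper, not part of the Zappar library): keeps one piece
// of content per face anchor using the tracker's onNewAnchor, onVisible and
// onNotVisible events. `createContent(anchor)` and
// `setContentVisible(content, isVisible)` are caller-supplied callbacks.
function trackFaceContent(faceTracker, createContent, setContentVisible) {
  // Content keyed by anchor ID, mirroring the tracker's own `anchors` Map.
  const contentById = new Map();

  // Create content the first time an anchor is reported by any event, so the
  // sketch does not depend on the relative order in which events are emitted.
  const ensureContent = anchor => {
    if (!contentById.has(anchor.id)) {
      contentById.set(anchor.id, createContent(anchor));
    }
    return contentById.get(anchor.id);
  };

  // Anchors are reused for new faces, so this should fire at most maxFaces times.
  faceTracker.onNewAnchor.bind(anchor => {
    ensureContent(anchor);
  });

  faceTracker.onVisible.bind(anchor => {
    setContentVisible(ensureContent(anchor), true);
  });

  faceTracker.onNotVisible.bind(anchor => {
    setContentVisible(ensureContent(anchor), false);
  });

  return contentById;
}
```

Creating content lazily in ensureContent is a design choice: it costs one Map lookup per event but avoids missing a face if onVisible happens to arrive before onNewAnchor within the same frame.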

Here is an example of using these events:

faceTracker.onNewAnchor.bind(anchor => {
  console.log("New anchor has appeared:", anchor.id);

  // You may like to create a new FaceAnchorTransformNode here for this anchor, and add it to your scene
});

faceTracker.onVisible.bind(anchor => {
  console.log("Anchor is visible:", anchor.id);
});

faceTracker.onNotVisible.bind(anchor => {
  console.log("Anchor is not visible:", anchor.id);
});

Face Landmarks

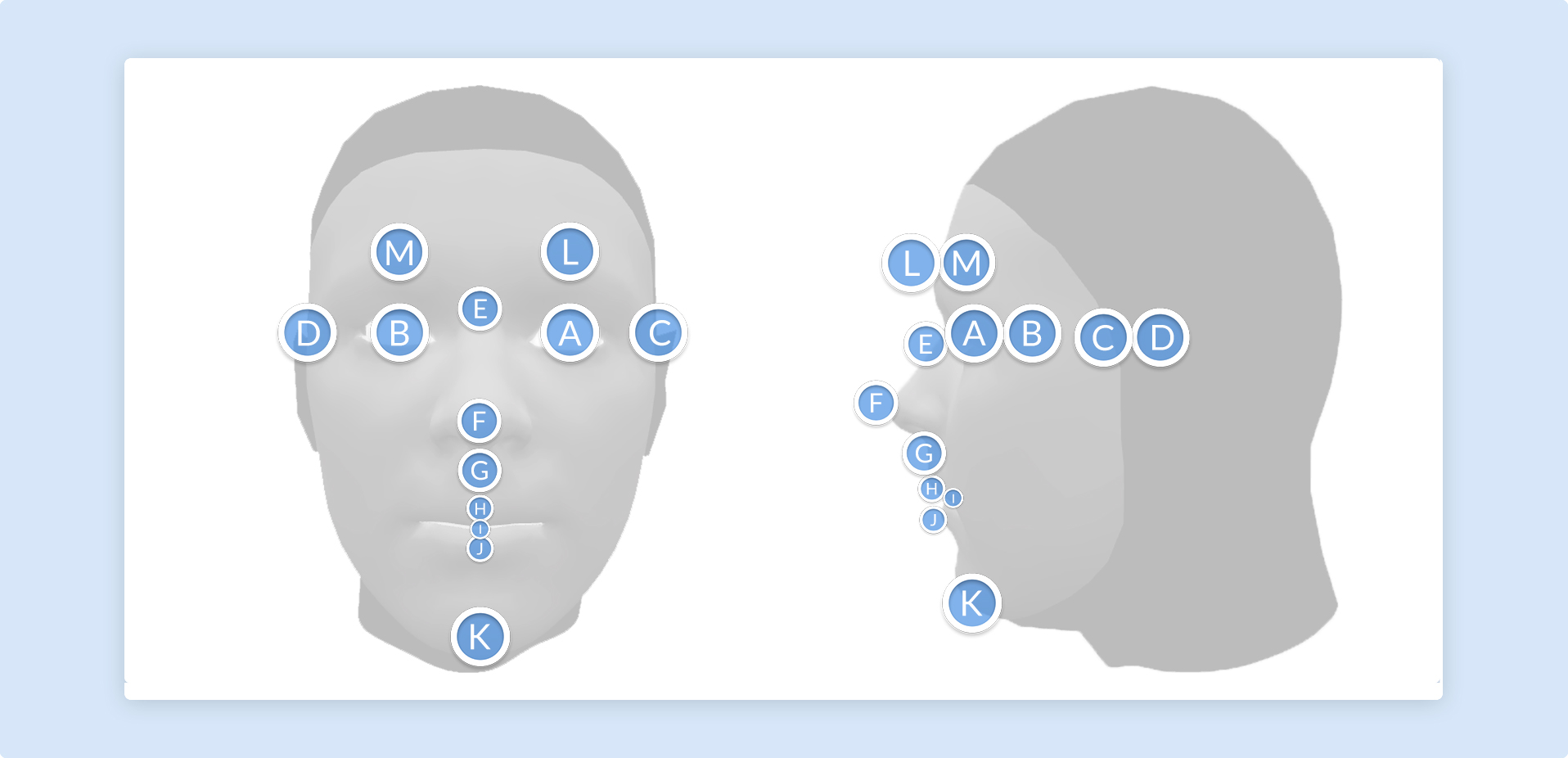

In addition to tracking the center of the head, you can use FaceAnchorTransformNode to attach content to various points on the user’s face. These landmarks remain accurate even as the user’s expression changes.

To track a landmark, construct a new FaceAnchorTransformNode object, passing your camera, face tracker, and the name of the landmark you’d like to track:

const faceAnchorTransformNode = new ZapparBabylon.FaceAnchorTransformNode('tracker', camera, faceTracker, ZapparBabylon.FaceLandmarkName.CHIN, scene);

// Child any 3D objects you'd like to track to this face
myModel.parent = faceAnchorTransformNode;

The following landmarks are available:

| Face Landmark | Diagram ID |
|---|---|
| EYE_LEFT | A |
| EYE_RIGHT | B |
| EAR_LEFT | C |
| EAR_RIGHT | D |
| NOSE_BRIDGE | E |
| NOSE_TIP | F |
| NOSE_BASE | G |
| LIP_TOP | H |
| MOUTH_CENTER | I |
| LIP_BOTTOM | J |
| CHIN | K |
| EYEBROW_LEFT | L |
| EYEBROW_RIGHT | M |

Note that ‘left’ and ‘right’ here are from the user’s perspective.
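Because ‘left’ and ‘right’ refer to the user’s own face, where a _LEFT or _RIGHT landmark appears on screen depends on whether the camera feed is mirrored. The hypothetical helper screenSideOfLandmark (not part of the library) applies ordinary mirror geometry to the landmark names; it assumes the user is looking at the screen and facing a user-facing camera, and is not something the library reports.

```javascript
// Hypothetical helper (not part of the Zappar library): returns the side of
// the screen ("left", "right" or "center") on which a landmark appears, as
// seen by the user looking at the screen while facing the camera.
// Landmark names ending in _LEFT/_RIGHT refer to the user's own left/right.
// Assumption: in a mirrored feed the user's left appears on screen-left, as in
// a real mirror; in an unmirrored feed it appears on screen-right.
function screenSideOfLandmark(landmarkName, mirrored) {
  let userSide;
  if (landmarkName.endsWith("_LEFT")) {
    userSide = "left";
  } else if (landmarkName.endsWith("_RIGHT")) {
    userSide = "right";
  } else {
    return "center"; // e.g. NOSE_TIP or CHIN lie on the face's midline
  }
  if (mirrored) return userSide;
  return userSide === "left" ? "right" : "left";
}
```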

Face Mesh

In addition to tracking the center of the face using FaceTracker, the Zappar library provides a number of meshes that will fit to the face/head and deform as the user’s expression changes. These can be used to apply a texture to the user’s skin, much like face paint, or to mask out the back of 3D models so the user’s head is not occluded where it shouldn’t be.

To use the face mesh, first construct a new FaceMesh object and load its data file. The loadDefaultFace function returns a promise that resolves when the data file has been loaded successfully. You may wish to show a loading screen to the user while this is taking place.

const faceMesh = new ZapparBabylon.FaceMesh();
faceMesh.loadDefaultFace().then(() => {
  // Face mesh loaded
});

Alternatively, the library provides a loader for creating a face mesh and loading its data file:

const faceMesh = new ZapparBabylon.FaceMeshLoader().loadFace();

While the faceMesh object lets you access the raw vertex, UV, normal and index data for the face mesh, you may wish to use the library’s FaceMeshGeometry object, which wraps the data as a Babylon.js Mesh. This Mesh must still be a child of a FaceAnchorTransformNode to appear in the correct place on-screen:

const faceTracker = new ZapparBabylon.FaceTrackerLoader().load();
const trackerTransformNode = new ZapparBabylon.FaceTrackerTransformNode('tracker', camera, faceTracker, scene);

// faceMeshTexture is the URL of the texture image to apply to the face
const material = new BABYLON.StandardMaterial('mat', scene);
material.diffuseTexture = new BABYLON.Texture(faceMeshTexture, scene);

const faceMesh = new ZapparBabylon.FaceMeshGeometry('face mesh', scene);
faceMesh.parent = trackerTransformNode;
faceMesh.material = material;

Each frame, after camera.updateFrame(), call one of the following functions to update the face mesh to the most recent identity and expression output from a face anchor:

// Update directly from a FaceAnchorTransformNode
faceMesh.updateFromFaceAnchorTransformNode(faceAnchorTransformNode);

// Update from a face anchor
faceMesh.updateFromFaceAnchor(myFaceAnchor);

At this time, there are two meshes included with the library.

Default mesh: The default mesh covers the user’s face, from the chin at the bottom to the forehead at the top, and from sideburn to sideburn. There are optional parameters that determine if the mouth and eyes are filled or not:

loadDefaultFace(fillMouth?: boolean, fillEyeLeft?: boolean, fillEyeRight?: boolean)

Full head simplified mesh: The full head simplified mesh covers the whole of the user’s head, including a portion of the neck. It’s ideal for drawing into the depth buffer in order to mask out the back of 3D models placed on the user’s head (see Head Masking below). There are optional parameters that determine if the mouth, eyes and neck are filled or not:

loadDefaultFullHeadSimplified(fillMouth?: boolean, fillEyeLeft?: boolean, fillEyeRight?: boolean, fillNeck?: boolean)

Head Masking

If you’re placing a 3D model around the user’s head, such as a helmet, it’s important to make sure the camera view of the user’s real face is not hidden by the back of the model. To achieve this, the library provides ZapparBabylon.HeadMaskMesh. It’s a BABYLON.Mesh that fits the user’s head and fills the depth buffer, ensuring that the camera image shows instead of any 3D elements behind it in the scene.
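Conceptually, the mask relies on the ordinary depth test: it writes depth but no color, so any model fragment behind it fails the depth test and the camera image already in the color buffer stays visible. The per-pixel sketch below models one row of pixels with plain arrays; it illustrates standard depth-buffer behavior under stated assumptions (a "less than" depth test, smaller depth meaning closer, and the camera image drawn first with no depth), not the library's or Babylon.js's actual rendering code.

```javascript
// Illustration only (not library code): composites one row of pixels the way a
// depth-only occluder behaves.
// cameraColors:   color of the camera image at each pixel (drawn first)
// maskDepths:     depth written by the head mask, or null where it doesn't cover
// modelFragments: { depth, color } of the 3D model at each pixel, or null
function compositeRow(cameraColors, maskDepths, modelFragments) {
  const color = cameraColors.slice();
  // The mask fills the depth buffer only; the color buffer keeps the camera image.
  const depth = maskDepths.map(d => (d === null ? Infinity : d));
  modelFragments.forEach((fragment, i) => {
    if (fragment === null) return; // the model doesn't cover this pixel
    if (fragment.depth < depth[i]) { // standard "less than" depth test
      color[i] = fragment.color;
      depth[i] = fragment.depth;
    }
  });
  return color;
}
```

With a helmet fragment at depth 2 over a mask at depth 1, the camera pixel survives; the same helmet at depth 0.5 is drawn, and where there is no mask at all the helmet is always drawn.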

To use it, construct the object using a ZapparBabylon.HeadMaskMeshLoader and make it a child of your face anchor TransformNode:

const mask = new ZapparBabylon.HeadMaskMeshLoader('mask mesh').load();
mask.parent = faceAnchorTransformNode;

Then, in each frame after camera.updateFrame(), call one of the following functions to update the head mesh to the most recent identity and expression output from a face anchor:

// Update directly from a FaceAnchorTransformNode
mask.updateFromFaceAnchorTransformNode(faceAnchorTransformNode);

// Update from a face anchor
mask.updateFromFaceAnchor(myFaceAnchor);

Behind the scenes, the HeadMaskMesh works using a full-head ZapparBabylon.FaceMesh with the mouth, eyes and neck filled in. Its material.disableColorWrite is set to true, so it fills the depth buffer but not the color buffer.
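Both the face mesh and the head mask must be updated every frame after camera.updateFrame(). A minimal sketch of that ordering follows; updateFaceFrame is a hypothetical helper name, and only camera.updateFrame() and updateFromFaceAnchorTransformNode() come from this page.

```javascript
// Hypothetical per-frame helper (not part of the Zappar library).
// Order matters: camera.updateFrame() processes the latest camera frame (and
// emits the tracker events), then each face-fitting mesh takes the most recent
// identity and expression, and only then is the scene drawn.
function updateFaceFrame(camera, faceAnchorTransformNode, faceMeshes, renderScene) {
  camera.updateFrame();
  for (const mesh of faceMeshes) {
    mesh.updateFromFaceAnchorTransformNode(faceAnchorTransformNode);
  }
  renderScene();
}
```

In a Babylon.js app, this might be called from the render loop, for example engine.runRenderLoop(() => updateFaceFrame(camera, faceAnchorTransformNode, [faceMesh, mask], () => scene.render())), assuming engine and scene are your existing Babylon.js engine and scene.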