Face Tracking

Face Tracking detects and tracks the user’s face. With Zappar’s Face Tracking library, you can attach 3D objects to the face itself, which makes it well suited to experiences where users try on AR hats or glasses. You can also render a 3D mesh that fits to and deforms with the face, adjusting as the user moves and changes their expression. This works well for virtual face-paint experiences.

Using the Template

Dragging the Face Tracker template into your hierarchy allows you to place 3D content that’s tracked to the user’s head. Once the template is in your hierarchy, add 3D objects as children of it so that they are tracked from the center of the user’s head.

Head Model

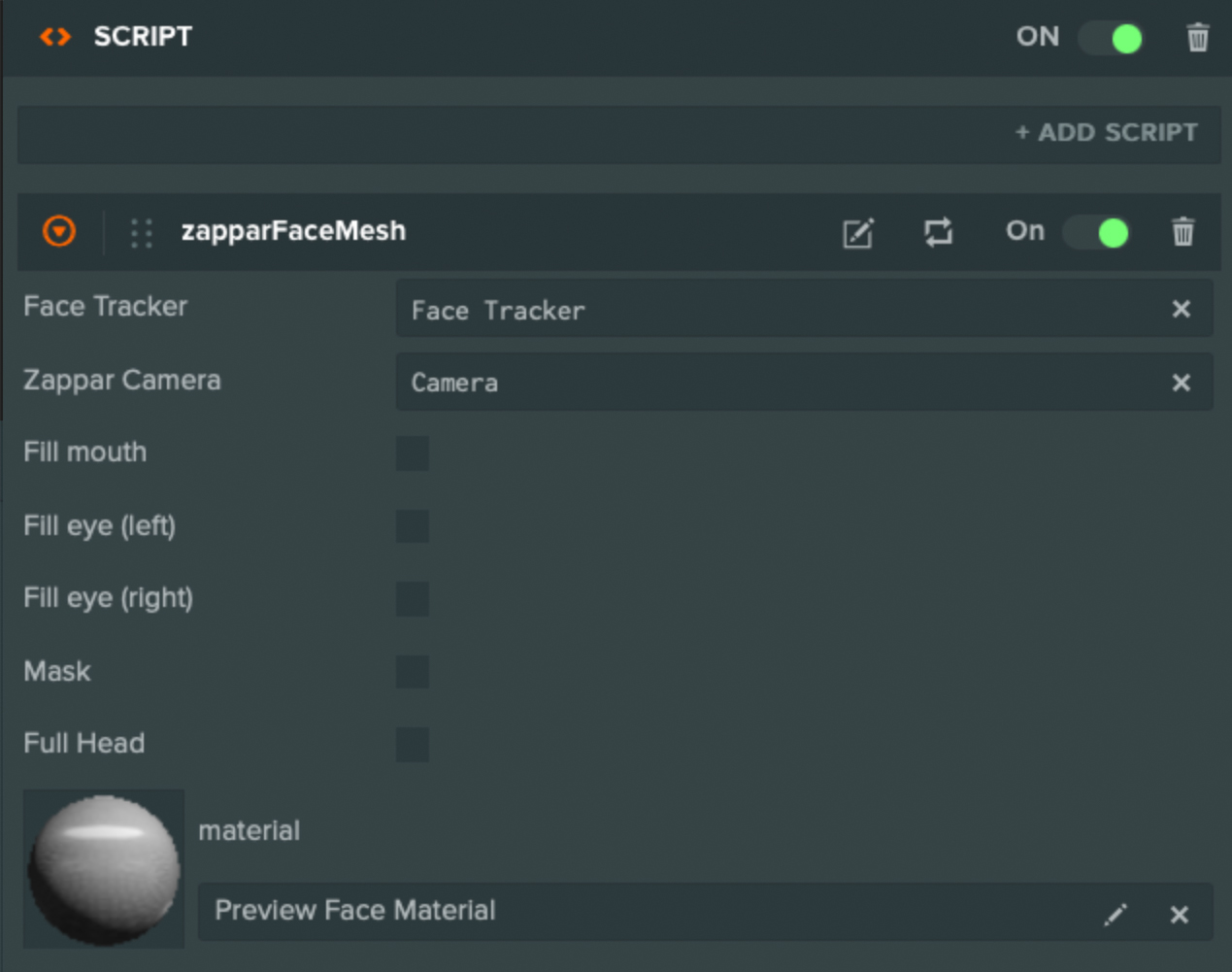

The Face Mesh provides a 3D mesh that fits the user’s face as their expression changes and their head moves. It exposes a Face Material parameter that can be set to any valid material (a UV map is provided to aid development). Each of the Fill options determines whether the relevant portion of the mesh is filled when it is rendered.

A Zappar Face Mesh requires a Zappar Face Tracker instance to be set as its Face Tracker property (as an attribute). The Face Mesh can appear anywhere in the scene hierarchy; however, place it as a child of the Face Tracker if you want the mesh to appear correctly attached to the face.

The face mesh fires an event when it has loaded. You may listen for it like this:

    this.app.on('zappar:face_mesh', (ev) => {
      if (ev.message === 'mesh_loaded') {
        // Mesh has loaded.
      }
    });

Head Masking

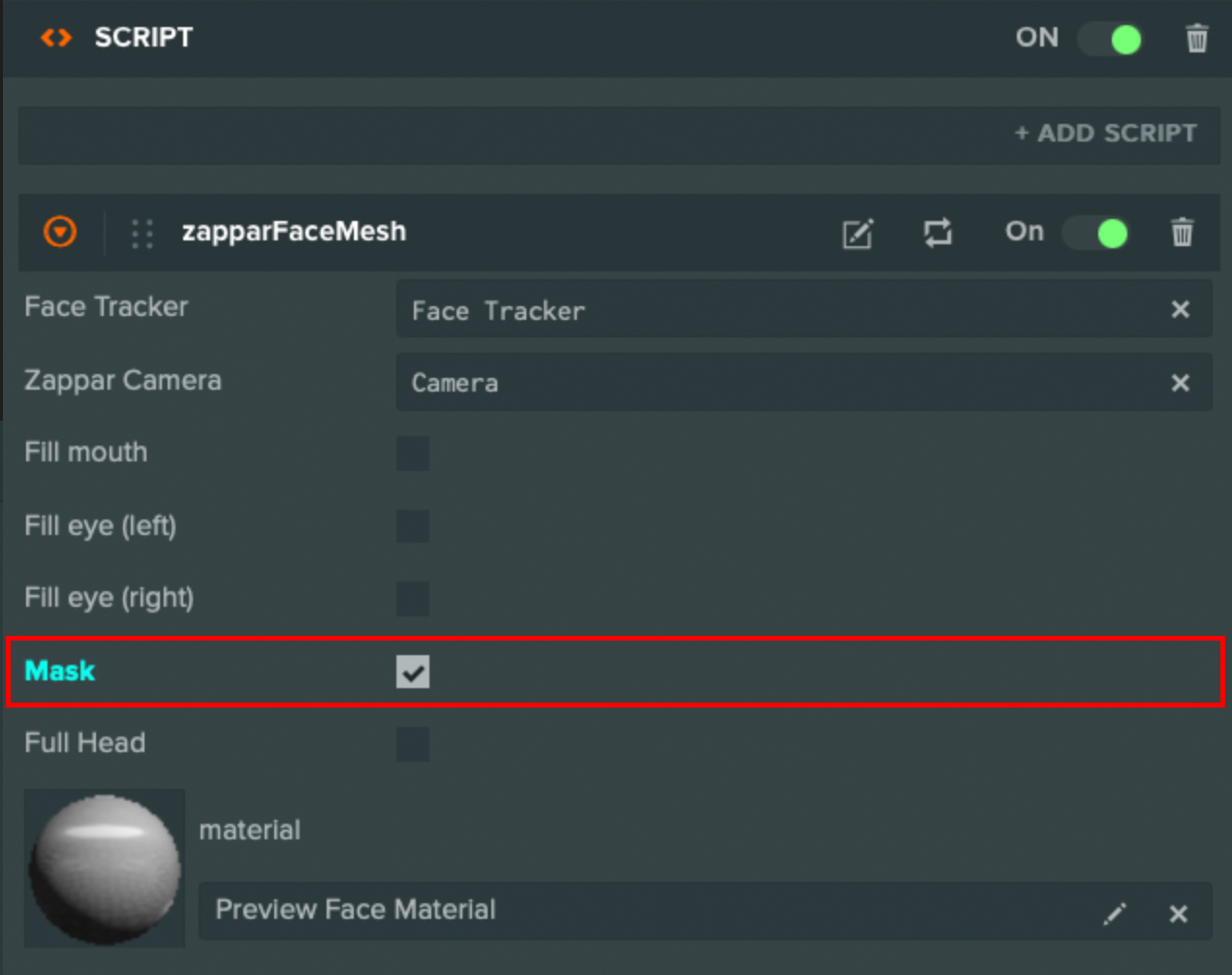

If you’re placing a 3D model around the user’s head, such as a helmet, it’s important to make sure the camera view of the user’s real face is not hidden by the back of the model. To achieve this, the head mesh provides a mask attribute. It fills the depth buffer but not the color buffer, so geometry behind the head is occluded while the camera image of the face remains visible.
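The mask attribute handles this for you, so no code is needed to use it. Purely to illustrate the general technique of writing depth without color, here is a minimal sketch. It is not Zappar’s implementation. The helper name `makeDepthOnly` is hypothetical, and the flag names (`redWrite`, `greenWrite`, `blueWrite`, `alphaWrite`, `depthWrite`, `depthTest`) are assumed to match those of a PlayCanvas-style material. A plain object stands in for the material so the sketch runs on its own.

```javascript
// Hypothetical helper: configures a material-like object so that it
// writes to the depth buffer but leaves the color buffer untouched.
function makeDepthOnly(material) {
  // Disable every color channel so nothing is drawn on screen.
  material.redWrite = false;
  material.greenWrite = false;
  material.blueWrite = false;
  material.alphaWrite = false;

  // Keep depth testing and depth writing on so the mask can occlude
  // whatever is rendered behind it afterwards.
  material.depthTest = true;
  material.depthWrite = true;

  // Assumption: a real engine material may need an explicit update call
  // to apply changes; the plain stand-in object below has none.
  if (typeof material.update === 'function') {
    material.update();
  }
  return material;
}

// Stand-in for a real material, used only to keep the sketch runnable.
const maskMaterial = makeDepthOnly({});
console.log(maskMaterial.depthWrite, maskMaterial.redWrite);
```

For occlusion of this kind to work, the masking geometry generally has to be drawn before the content it is meant to hide.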

Face Landmarks

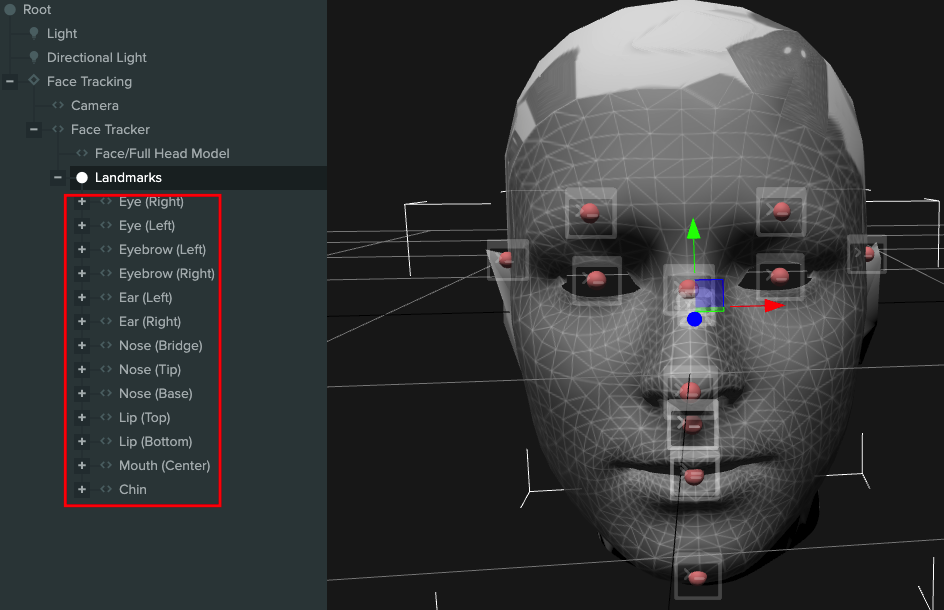

In addition to tracking the center of the head, you can use FaceLandmarks to track content from various points on the user’s face. These landmarks remain accurate even as the user’s expression changes.

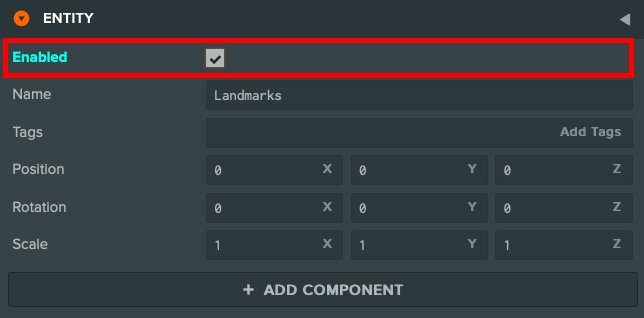

To track a landmark, enable the face landmarks in the hierarchy and place your content as a child of the required landmark.

Events

Events are fired from this.app. You may listen for face tracker events like this:

    this.app.on('zappar:face_tracker', (ev) => {});

The event object passed to the callback contains a message property which stores the event type. The following event messages are available:

    this.app.on('zappar:face_tracker', (ev) => {
      switch (ev.message) {
        /**
         * Fired when the face tracking model is loaded.
         */
        case 'model_loaded':
          break;

        /**
         * Fired when a new anchor is created by the tracker.
         */
        case 'new_anchor':
          console.log(ev.anchor);
          break;

        /**
         * Fired when an anchor becomes visible in a camera frame.
         */
        case 'anchor_visible':
          console.log(ev.anchor);
          break;

        /**
         * Fired when an anchor goes from being visible in the previous camera frame,
         * to not being visible in the current frame.
         */
        case 'anchor_not_visible':
          console.log(ev.anchor);
          break;
      }
    });
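A common use of these messages is to show content only while a face is in view. The sketch below is one possible way to do that, not part of the Zappar API. The helper `watchFaceVisibility` and the `FakeApp` class are hypothetical. `FakeApp` only imitates the `on` and `fire` methods of the app object so the example runs outside PlayCanvas. The sketch also assumes that the same `ev.anchor` object is passed for a given face across events.

```javascript
// Hypothetical stand-in for the app's event API (on/fire only),
// used so this sketch can run without PlayCanvas.
class FakeApp {
  constructor() {
    this.handlers = new Map();
  }
  on(name, callback) {
    if (!this.handlers.has(name)) this.handlers.set(name, []);
    this.handlers.get(name).push(callback);
  }
  fire(name, ev) {
    (this.handlers.get(name) || []).forEach((callback) => callback(ev));
  }
}

// Hypothetical helper: calls onChange(true) when the first anchor becomes
// visible and onChange(false) when the last visible anchor is lost.
function watchFaceVisibility(app, onChange) {
  // Assumption: anchors can be compared by object identity.
  const visibleAnchors = new Set();

  app.on('zappar:face_tracker', (ev) => {
    const wasVisible = visibleAnchors.size > 0;

    if (ev.message === 'anchor_visible') {
      visibleAnchors.add(ev.anchor);
    } else if (ev.message === 'anchor_not_visible') {
      visibleAnchors.delete(ev.anchor);
    }

    const isVisible = visibleAnchors.size > 0;
    if (isVisible !== wasVisible) onChange(isVisible);
  });
}

// Simulate the tracker finding and then losing one face.
const app = new FakeApp();
const changes = [];
watchFaceVisibility(app, (visible) => changes.push(visible));

const anchor = {};
app.fire('zappar:face_tracker', { message: 'new_anchor', anchor });
app.fire('zappar:face_tracker', { message: 'anchor_visible', anchor });
app.fire('zappar:face_tracker', { message: 'anchor_not_visible', anchor });

console.log(changes);
```

Inside a script, you would pass this.app in place of the stand-in and use the callback to enable or disable the entity that holds your content.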